Who We Are

Measures for Justice is a nonpartisan nonprofit that’s bringing transparency and accountability into the mix of how justice gets pursued in this country.

We envision a world in which the criminal justice system is fully transparent, accessible, and accountable.

We are changing the future of criminal justice by developing tools that help communities, including the institutions that serve them, reshape how the system works.

As an organization, we have a set of values that govern all the choices we make and how we conduct ourselves every day.

We:

Measures for Justice was founded in 2011 to help communities access criminal justice data as the basis for informed decision-making about how to reshape the system.

We’ve spent years developing and refining a process for collecting, standardizing, and publishing criminal justice data.

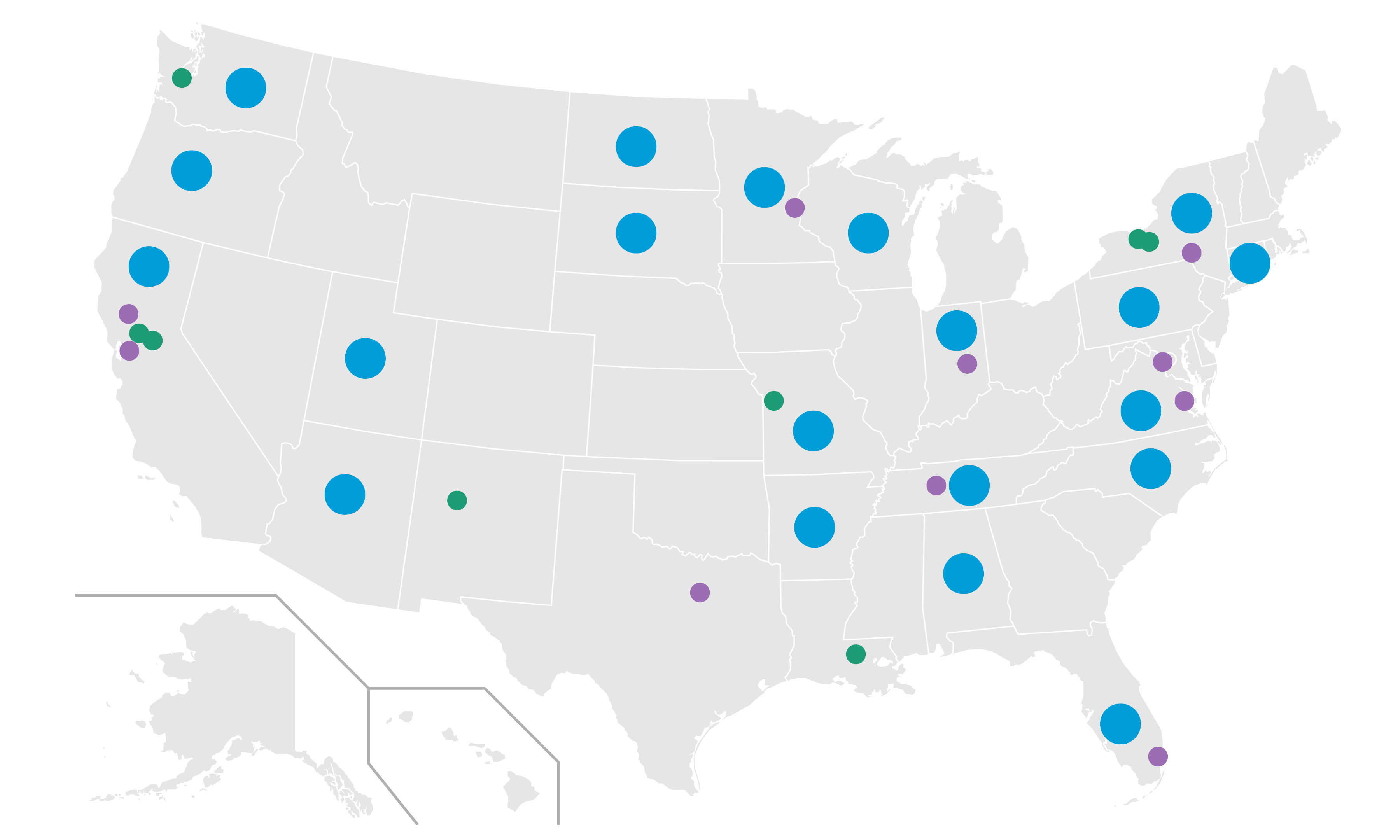

We have developed a detailed methodology to standardize criminal justice data across jurisdictions throughout the United States.

Our measures provide a comprehensive picture of how cases are being handled across counties from arrest to post-conviction.

Our councils help guide and inform our work. They comprise experts in data, measurement and methods, and policing.

MFJ is a nonpartisan nonprofit headquartered in Rochester, NY. Our team includes experts in community engagement, data engineering, product design, and criminal justice. We partner with multiple organizations to lead the charge towards a criminal justice system that is fully transparent, accountable, and accessible.

The Board is responsible for devising policies and procedures that support best practices for MFJ.

A chance to look back on what we’ve achieved and the people we’ve achieved it with.

Come join the effort to make the criminal justice system transparent, accountable, and accessible.

One of the largest bodies of standardized and coherent county-level criminal justice data in the country.

A community-driven data tool that shapes criminal justice policy.

Tools in use that help agencies gauge and improve the quality of their data in preparation for greater transparency.